Questions are growing about Grammarly’s use of authors’ identities in its AI-powered writing suggestions without clear permission. The company recently acknowledged the criticism but stopped short of apologizing or removing the feature. Instead, affected writers must manually opt out if they do not want their names attached to AI-generated guidance. The situation has sparked wider debate about consent, transparency, and how artificial intelligence uses real experts to boost credibility.

For many writers, the discovery came as a surprise. Their names appeared inside AI writing suggestions designed to make Grammarly’s advice seem more authoritative. Yet the individuals themselves had never agreed to participate in the system.

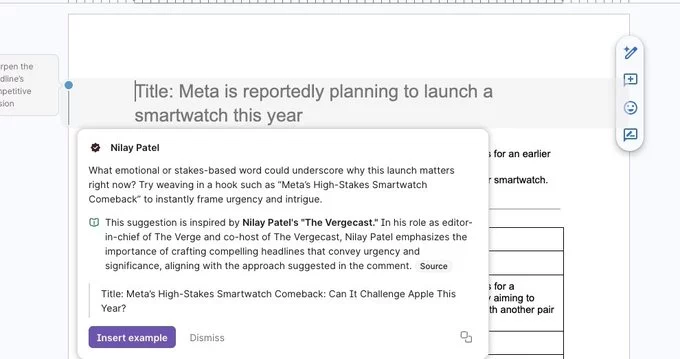

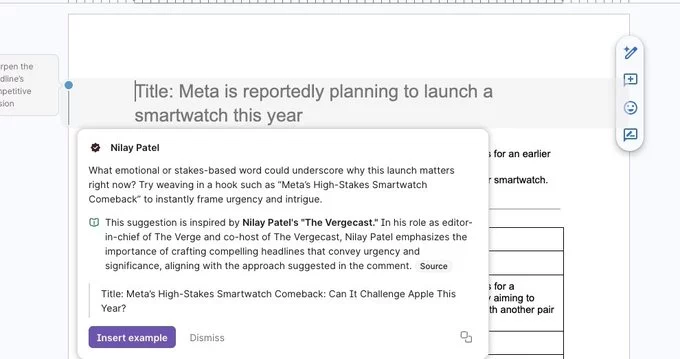

The controversy centers on a feature designed to highlight expert opinions during the editing process. Within the tool, AI suggestions may appear alongside references to recognized authors or editors.

According to the company, the feature was meant to help users discover valuable perspectives from experienced voices. When the AI recommends a change, the interface may display an expert’s name as a form of contextual guidance.

However, critics argue the approach blurs the line between genuine expert commentary and automated AI output. Because the suggestions are generated by artificial intelligence, attaching a real person’s name can make them appear endorsed by that individual.

As a result, the feature has raised questions about transparency and accountability in AI-assisted writing.

The biggest concern involves consent. Several writers discovered their names were being used inside AI suggestions without any notification beforehand.

For professionals who rely on reputation and credibility, this creates a complicated situation. Readers and users may assume the expert directly approved or authored the advice presented by the AI. In reality, those individuals may have had no involvement with the recommendation.

This disconnect has fueled frustration among journalists, editors, and authors who suddenly found themselves linked to AI-generated content they never reviewed.

Many say the practice risks misrepresenting their expertise.

Following public criticism, Grammarly addressed the issue. The company acknowledged the feedback and suggested the product experience could be improved.

Instead of removing the system, the company introduced an opt-out process. Writers who do not want their identities used can send a request asking to be removed from the feature.

The company explained that the goal of the tool is to help users discover influential ideas and perspectives while writing. It also stated that giving experts more control over how their names appear will improve the experience.

Still, the core feature remains active.

The debate highlights a growing challenge across the AI industry: credibility. As generative tools become more sophisticated, companies increasingly look for ways to make AI suggestions appear trustworthy.

One method is connecting AI output to real-world expertise. Attaching recognizable names or professional voices can make recommendations feel more reliable to users.

Yet when those connections happen without permission, the strategy quickly raises ethical questions. Users may struggle to tell the difference between an authentic expert opinion and a machine-generated suggestion.

For content creators, reputation is one of their most valuable assets. Using their names without approval can undermine the trust that reputation depends on.

The controversy also places pressure on company leadership, including Shishir Mehrotra, and on the broader company strategy around AI features.

Observers say transparency will be critical as writing tools continue evolving. Users increasingly rely on AI assistants not only for grammar corrections but also for rewriting, summarizing, and content generation.

When AI tools influence what people write, understanding where advice comes from becomes essential.

That means clear disclosure about how expert voices are used and whether those individuals actually contributed to the recommendation.

The dispute over Grammarly’s use of authors’ identities may become an early example of the growing pains facing AI writing platforms. As technology integrates more deeply into everyday communication, ethical design decisions matter more than ever.

Experts expect future AI systems to include stronger consent policies and clearer attribution rules. Companies will likely face increasing pressure to ensure individuals have control over how their names, expertise, and reputations appear in AI products.

For now, the opt-out option offers a temporary solution. But the broader conversation about AI transparency and author rights is only beginning, and the outcome could reshape how writing assistants operate in the years ahead.
