Grammarly is facing growing criticism after users discovered that its AI writing tool may reference real experts’ identities in suggestions without clear permission. The controversy centers on a feature called “Expert Review,” where the platform claims its recommendations are inspired by subject-matter experts. Questions about transparency, consent, and AI ethics have quickly surfaced as more people learn how the feature works.

Many users rely on Grammarly’s AI for editing emails, essays, and professional documents. However, concerns are emerging about whether the platform accurately represents expert involvement in its AI-generated suggestions. The issue highlights a broader debate about how AI tools use names, reputations, and identities to build trust with users.

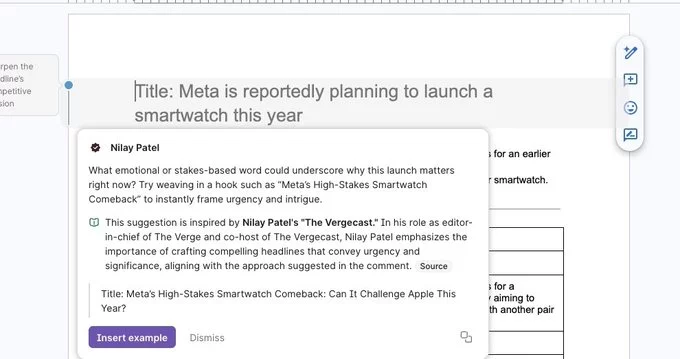

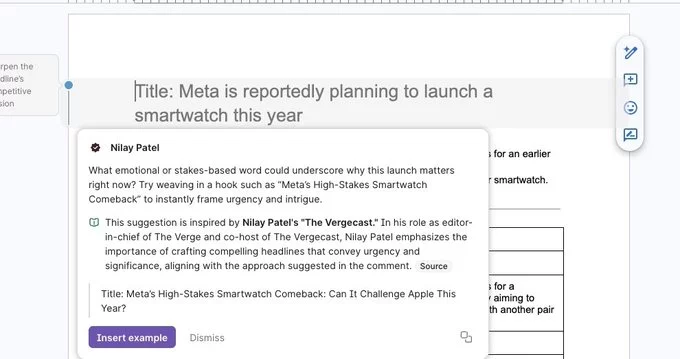

Grammarly’s newer AI-powered writing suggestions are designed to go beyond grammar corrections. The “Expert Review” system claims to provide advice modeled after guidance from specialists in areas like journalism, business communication, and academic writing.

When the feature triggers, users may see suggestions that appear to be influenced by specific professionals. These recommendations aim to make writing more persuasive, structured, or audience-friendly. For example, the AI might suggest rewriting a sentence based on communication principles associated with industry experts.

On the surface, this approach helps position Grammarly as a tool that combines AI with human expertise. The goal is to make users feel confident that suggestions reflect real-world professional standards rather than purely algorithmic guesses.

However, the way those expert identities are referenced has sparked debate.

Critics argue that associating AI suggestions with real individuals can be misleading if those experts did not directly approve or participate in the feature. When a writing tool implies that advice comes from a particular expert perspective, users may assume that person helped develop or endorse the AI.

That assumption can create confusion about authorship and responsibility. If an expert’s name or identity is used as inspiration for AI-generated advice, people naturally expect some level of consent or involvement.

Transparency is a key issue here. AI tools increasingly rely on trust, especially when they claim to incorporate professional expertise. Without clear disclosure about how those identities are used, some critics say the feature risks blurring the line between genuine expert guidance and automated suggestion.

The situation reflects a larger ethical challenge facing the AI industry: balancing marketing claims with honest representation of how models actually work.

AI writing assistants have become essential productivity tools for students, professionals, and content creators. Because of that influence, transparency around how recommendations are generated is becoming increasingly important.

When a platform suggests edits based on “expert insight,” users may change how they write, communicate, or present ideas. Those decisions can affect business proposals, academic work, and even job applications.

Clear explanations about how AI models are trained and how expert frameworks are incorporated help maintain user trust. Without that clarity, even helpful features can raise doubts about accuracy and authenticity.

The debate surrounding Grammarly’s “Expert Review” feature highlights the importance of responsible AI design. Companies building AI assistants must ensure that claims about expertise are both truthful and clearly explained.

This controversy arrives at a time when AI companies are under increasing scrutiny for how they market their technology. As artificial intelligence becomes more advanced, branding strategies that highlight human expertise are becoming more common.

Linking AI tools to well-known professional voices can make products feel more credible. But if those connections are unclear or exaggerated, the strategy can backfire.

Users today are more aware of how AI systems operate than they were even a few years ago. Many expect straightforward explanations about data sources, training methods, and the role of human input in shaping AI output.

For Grammarly, addressing these concerns will likely involve clarifying how the “Expert Review” feature works and how expert identities are incorporated into its recommendations.

Beyond one product feature, the situation points to a larger conversation about identity in AI systems. As tools grow more sophisticated, developers are experimenting with ways to mimic human expertise and voice.

That innovation can make AI more helpful and engaging. At the same time, it raises ethical questions about consent, attribution, and representation.

Trust remains the foundation of successful AI products. Platforms that prioritize transparency, clear communication, and responsible use of identities are more likely to maintain user confidence as AI continues to evolve.

For users and developers alike, the Grammarly debate serves as a reminder that powerful AI tools must also be accountable and transparent.
