Questions about whether AI tools can imitate real writers without consent have sparked a major debate across the tech world. Grammarly has now responded to growing criticism by shutting down its controversial "Expert Review" feature, which generated writing suggestions inspired by real experts. The company says it will redesign the system and give professionals full control over how, or whether, their voices are used in future AI tools.

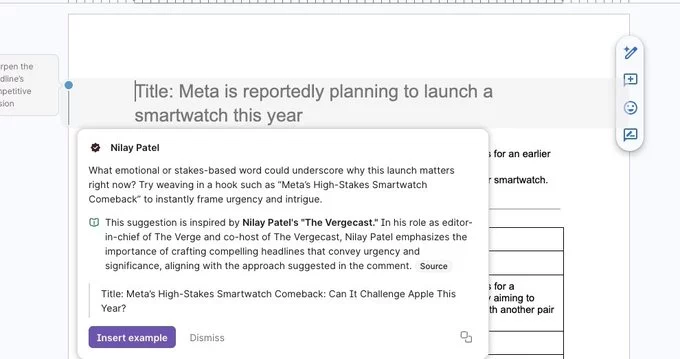

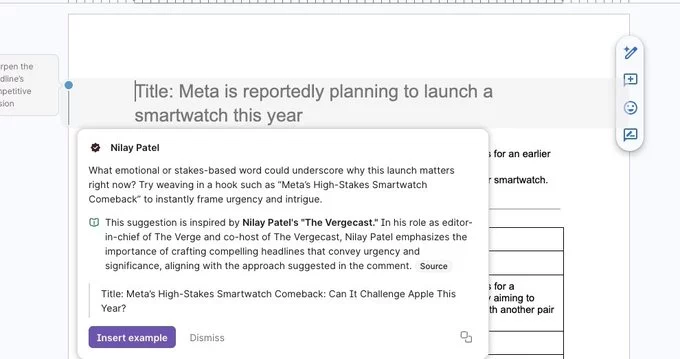

Grammarly confirmed it has disabled the Expert Review AI feature after receiving strong criticism from writers and journalists. The tool originally generated writing suggestions that claimed to be “inspired by” well-known experts and public figures.

Many professionals argued the feature created AI-generated advice that appeared to mimic their voice without permission. Critics said this blurred the line between genuine expertise and machine-generated imitation.

In response, Grammarly acknowledged the concerns and decided to pause the feature entirely. The company now plans to rethink how expert knowledge can be used in AI-powered writing tools while respecting the rights of creators.

The backlash began when writers discovered that Grammarly’s AI suggestions were linked to their names and writing styles. Even though the system relied on publicly available information, experts argued that it still created the impression that they had endorsed or participated in the feature.

For many professionals, the issue was not just about data usage; it was about identity and authorship. When an AI system appears to replicate someone's writing voice, readers may assume that person approved of or contributed to the content.

Critics say this raises larger questions about how artificial intelligence companies use publicly available material. As generative AI becomes more powerful, protecting the identity and reputation of creators has become an urgent topic across the technology industry.

Following the criticism, Grammarly's leadership issued a public apology and acknowledged that the company had "missed the mark." Executives stated that the feature's original goal was to help users discover influential perspectives and improve their writing.

However, feedback from experts made clear that the system had created unintended problems. As a result, Grammarly is now redesigning the concept behind Expert Review.

The company says future versions will prioritize clear consent and participation from experts. Professionals will have the ability to choose whether they want their knowledge represented in AI-generated suggestions.

Grammarly’s leadership says the next version of the feature will give experts far more control. Instead of automatically referencing public figures, the system may allow experts to opt in and actively shape how their knowledge is used.

This approach could also allow experts to control how their brand or professional reputation appears within AI-generated writing tools. In some cases, creators may even be able to define how their expertise is presented or monetized.

The company believes this new model could create a healthier relationship between AI platforms and subject-matter experts.

Grammarly’s decision comes at a time when many AI companies face increasing scrutiny over how they train and deploy generative models. Writers, artists, and journalists have raised concerns that their work is being used to train AI systems without clear consent.

Several legal challenges have already emerged across the tech sector, with creators arguing that AI tools should not replicate their style, voice, or intellectual identity without permission.

As a result, companies are being pushed to create more transparent and ethical AI practices. This includes clearer policies around training data, identity usage, and how AI-generated content references real people.

Grammarly’s decision to disable the Expert Review feature highlights a turning point for AI-powered writing platforms. While users still want smart editing and writing assistance, creators want stronger protections for their work and identity.

Future AI tools may rely more heavily on voluntary collaboration with experts rather than automated imitation. This shift could lead to new models where professionals directly contribute knowledge to AI systems while maintaining control over how it is used.

For now, Grammarly’s move signals that the tech industry is beginning to listen to the concerns of creators. As AI continues reshaping digital writing, finding the right balance between innovation and ethical responsibility will remain one of the biggest challenges ahead.