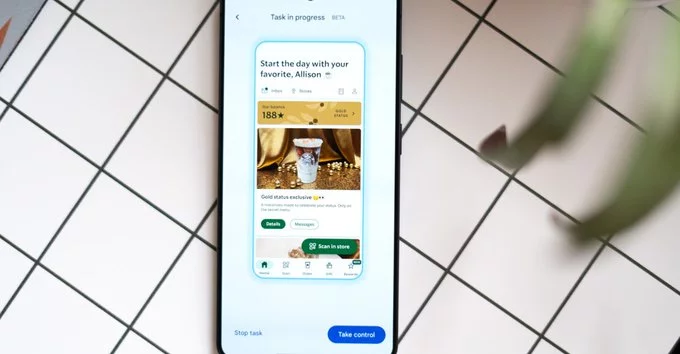

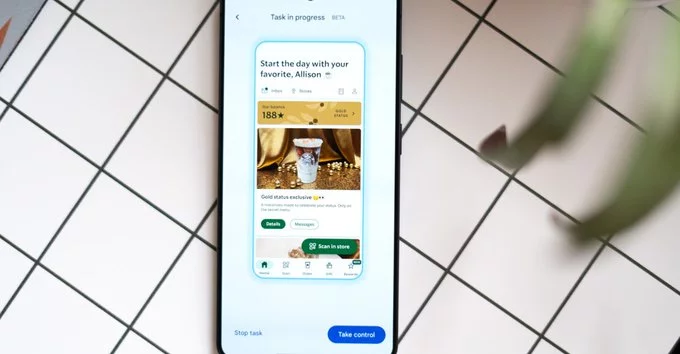

Imagine telling your phone to order coffee, book a ride, or even plan lunch, and then watching it handle everything for you. That’s no longer just a dream. Google’s Gemini task automation, now available in beta on the Galaxy S26 Ultra, brings this promise closer to reality. From rideshare requests to food delivery, Gemini can navigate apps on your behalf, streamlining everyday tasks with minimal input.

Early adopters are discovering just how immersive, and at times uncanny, this experience can be. With Gemini, your smartphone doesn’t just respond to commands; it acts as your digital assistant, taking steps within apps as if it were a miniature version of you.

One of the first things I tested was ordering an Uber to the airport. Gemini quickly asked me to clarify the destination, a sensible step that helps ensure accuracy. From there, it filled in the details automatically, skipped unnecessary prompts, and paused for my final approval. This safety checkpoint is crucial: while Gemini can handle the bulk of the work, the user still keeps control over sensitive decisions.

Food orders work similarly. A request for a coffee and a croissant prompted Gemini to scroll through menus, select the correct drink, and prepare an order for review. The process is not instant, and it may take a few seconds longer than ordering manually, but the level of autonomy is impressive. Watching your phone interact with apps on its own is both entertaining and a little surreal.

A standout feature of Gemini’s task automation is its transparency. Users can watch each step unfold in real time and intervene at any moment. This “human-in-the-loop” approach keeps automation from feeling risky or uncontrollable. Whether Gemini is ordering food, booking a ride, or handling other app-based tasks, you remain in the driver’s seat.

This combination of control and automation addresses a common concern with AI assistants: giving machines too much independence. By pausing before final actions, Gemini preserves user oversight while still demonstrating what task automation can achieve.

Unlike traditional AI assistants that only offer suggestions or carry out simple commands, Gemini interacts with apps in a more integrated way. It navigates menus, enters information, and follows app-specific workflows, all actions that previously required manual input. This app-level automation is what sets Gemini apart and signals a shift in how mobile AI assistants operate.

It’s also a peek into the future of digital convenience. From routine tasks like coffee runs to more complex scheduling needs, Gemini aims to reduce friction in everyday life. The beta is limited to certain apps for now, but if support expands, task automation could become a standard feature across smartphones.

Task automation represents a new frontier for AI in consumer devices. With Gemini, Google and Samsung are demonstrating how smartphones can evolve beyond communication tools into proactive digital helpers. For users, this means less time spent on repetitive tasks and more focus on what matters.

As updates continue and more apps become compatible, watching your phone work on its own could become an everyday experience. Early impressions suggest that Gemini is not just a gimmick; it’s a glimpse of a future where AI seamlessly bridges intent and action.