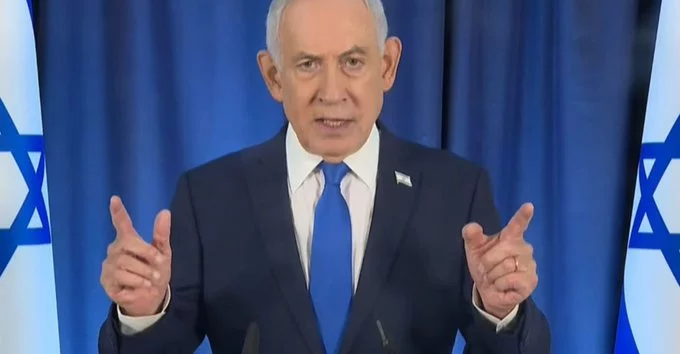

Rumors claiming Benjamin Netanyahu has been replaced by an AI-generated clone are spreading rapidly online. The speculation began after viral videos appeared to show unusual visual glitches, prompting questions about deepfakes and digital manipulation. Despite the buzz, there is no credible evidence supporting these claims. What this situation really highlights is a growing global concern: distinguishing reality from AI-generated content is becoming increasingly difficult in 2026.

The controversy began after a livestreamed press conference featuring Netanyahu circulated across social media. Viewers pointed to what looked like an extra finger on his hand, a common visual error associated with earlier AI-generated imagery. This detail quickly fueled theories suggesting the footage was fabricated.

As the clip gained traction, additional claims followed, including odd visual distortions like unnatural movements and strange object behavior. These moments were interpreted as “proof” of AI manipulation, even though such artifacts can also result from compression, lighting, or camera quality issues.

The speed at which the claims spread demonstrates how quickly misinformation can evolve when paired with emerging technologies.

Independent fact-checkers and digital analysts have reviewed the footage and found no evidence that it was AI-generated. Experts noted that the supposed “extra finger” could be explained by motion blur and video compression artifacts.

Another key detail is the length of the video itself. At nearly 40 minutes, it exceeds the typical capabilities of most current AI video generation tools, which still struggle with long, consistent sequences. This significantly weakens the argument that the footage is synthetic.

Ultimately, the claims lack technical backing, which suggests the viral theory is driven more by perception than proof.

In response to the growing speculation, Netanyahu released a new video on X aimed at directly addressing the rumors. Filmed casually in a coffee shop, the clip shows him interacting naturally and even asking the person recording to count his fingers.

This “proof-of-life” approach is becoming more common among public figures facing deepfake accusations. By presenting real-time, unscripted footage, leaders attempt to reassure audiences of their authenticity.

However, even these efforts are not always enough to convince skeptics, which points to a deeper problem of trust in digital media.

Advancements in AI have made it possible to generate highly realistic videos, images, and audio clips. While this technology has positive uses in entertainment and accessibility, it also creates opportunities for misinformation.

As tools improve, distinguishing between real and synthetic content becomes harder for the average viewer. Small visual inconsistencies—once obvious signs of manipulation—are now subtle enough to spark debate rather than certainty.

This growing uncertainty is eroding public trust, especially when it involves political figures or global events.

The Netanyahu deepfake rumors are less about one individual and more about a broader shift in how people consume information. Visual evidence, once considered reliable, is no longer automatically trusted.

Social media platforms amplify this issue by allowing unverified content to spread quickly, often reaching millions before fact-checking can catch up. As a result, even false claims can leave a lasting impression.

Moving forward, digital literacy and critical thinking will play a crucial role in helping users navigate this evolving landscape. Until those skills are widespread, situations like this will continue to blur the line between reality and illusion, leaving audiences questioning what they see online.
